State of the Art on Neural Rendering

Ayush Tewari1 Ohad Fried2 Justus Thies3 Vincent Sitzmann4 Stephen Lombardi5 Kalyan Sunkavalli6 Ricardo Martin-Brualla7 Tomas Simon5 Jason Saragih5 Matthias Nießner3 Rohit K Pandey7 Sean Fanello8 Gordon Wetzstein2 Jun-Yan Zhu9 Christian Theobalt1 Maneesh Agrawala2 Eli Shechtman6 Dan B Goldman7 Michael Zollhöfer10

1Max Planck Institute for Informatics 2Stanford University 3Technical University of Munich 4MIT EECS 5Facebook 6Adobe Research 7Google 8Microsoft Research 9MIT 10Meta

EUROGRAPHICS 2020

Abstract

Efficient rendering of photo-realistic virtual worlds is a long standing effort of computer graphics. Modern graphics techniques have succeeded in synthesizing photo-realistic images from hand-crafted scene representations.

However, the automatic generation of shape, materials, lighting, and other aspects of scenes remains a challenging problem that, if solved, would make photo-realistic computer graphics more widely accessible.

Concurrently, progress in computer vision and machine learning have given rise to a new approach to image synthesis and editing, namely deep generative models.

Neural rendering is a new and rapidly emerging field that combines generative machine learning techniques with physical knowledge from computer graphics, e.g., by the integration of differentiable rendering into network training.

With a plethora of applications in computer graphics and vision, neural rendering is poised to become a new area in the graphics community, yet no survey of this emerging field exists.

This state-of-the-art report summarizes the recent trends and applications of neural rendering.

We focus on approaches that combine classic computer graphics techniques with deep generative models to obtain controllable and photo-realistic outputs.

Starting with an overview of the underlying computer graphics and machine learning concepts, we discuss critical aspects of neural rendering approaches.

Specifically, our emphasis is on the type of control, i.e., how the control is provided, which parts of the pipeline are learned, explicit vs.~implicit control, generalization, and stochastic vs.~deterministic synthesis.

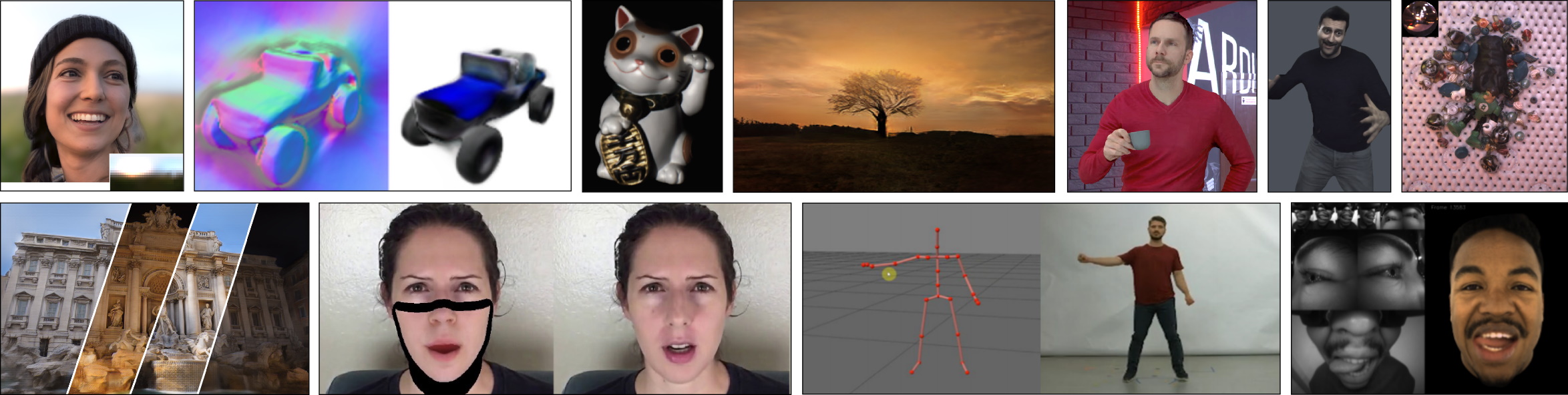

The second half of this state-of-the-art report is focused on the many important use cases for the described algorithms such as novel view synthesis, semantic photo manipulation, facial and body reenactment, relighting, free-viewpoint video, and the creation of photo-realistic avatars for virtual and augmented reality telepresence.

Finally, we conclude with a discussion of the social implications of such technology and investigate open research problems.

Bibtex

Bibtex